GPT-4o vs Claude vs Gemini: Which Should You Build On? (2026)

Claude leads on reasoning, GPT-4o on ecosystem, Gemini on cost. Here's which AI model your business should actually build on in 2026 — with pricing, accuracy, and vendor stability compared.

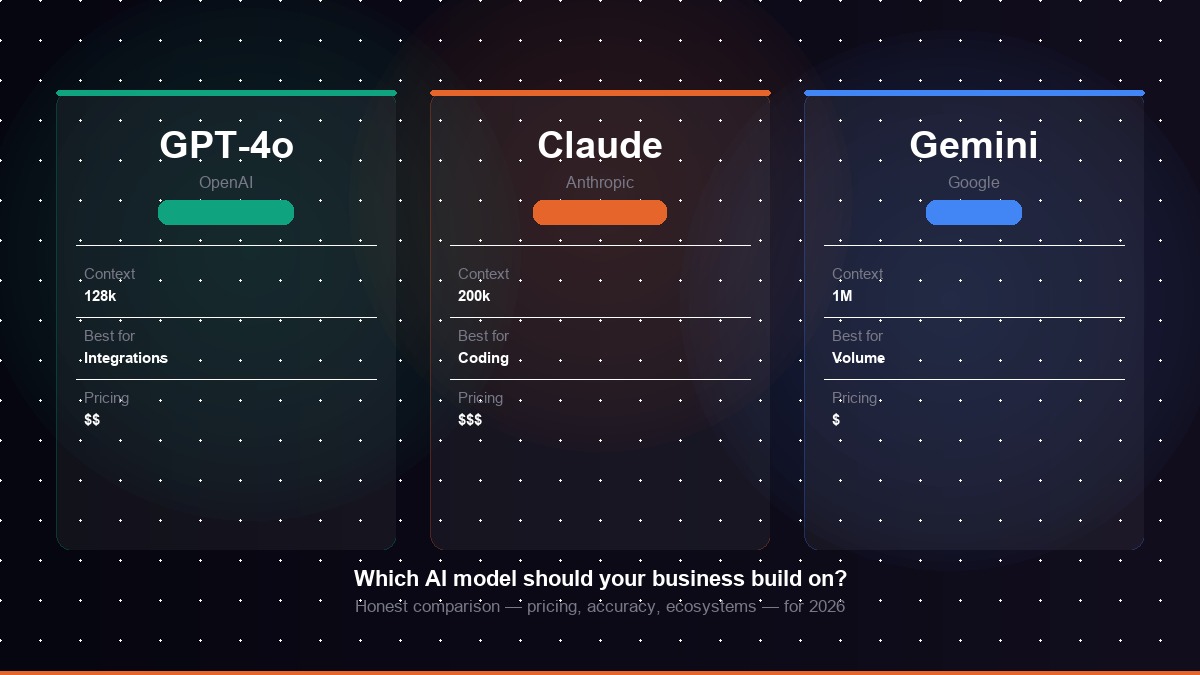

Three AI model families dominate enterprise AI development in 2026: OpenAI's GPT-4o, Anthropic's Claude 3.7 Sonnet, and Google's Gemini 2.0. All three are capable of powering sophisticated business applications. None is universally best. The right choice depends on your use case, budget, technical requirements, and existing infrastructure.

This guide cuts through the marketing language and gives you an honest comparison based on real-world production deployments — with clear verdicts for each type of business application.

Kovil AI · AI Engineering

Not sure which AI model to build on? Our engineers work across GPT-4o, Claude, and Gemini.

GPT-4o: The Enterprise Standard

What it is

GPT-4o is OpenAI's flagship multimodal model, capable of processing and generating text, images, and audio. It is the most widely deployed AI model in enterprise applications, backed by the largest ecosystem of integrations, third-party tools, and developer resources in the industry.

Strengths

Ecosystem breadth. GPT-4o connects to the widest range of third-party tools, platforms, and APIs. If you are using a CRM, marketing platform, or business software, there is almost certainly a GPT-4o integration available.

Reliability and track record. OpenAI's APIs are mature, well-documented, and battle-tested across millions of production applications. For business-critical use cases, the operational reliability is a genuine advantage.

GPT-4o-mini for cost routing. The GPT-4o-mini variant offers dramatically lower pricing ($0.15 input / $0.60 output per million tokens) with quality that is acceptable for simple tasks, making cost-tiered architectures straightforward to implement.

Weaknesses

Coding and reasoning. GPT-4o trails Claude on rigorous coding and multi-step reasoning benchmarks in 2026. For applications where code generation or complex logical inference is central, this gap is meaningful.

Context window. GPT-4o's 128k context window is large but trails Claude (200k) and Gemini 2.0 Flash (1M tokens) for applications requiring very long document processing.

Claude 3.7 Sonnet: The Reasoning Leader

What it is

Anthropic's Claude 3.7 Sonnet is the current leader on reasoning-heavy and coding-intensive tasks. Its extended thinking mode — which allows the model to reason step by step before producing a final answer — significantly improves performance on complex problems where intermediate reasoning matters. Claude models are designed with a strong emphasis on output consistency and safety.

Strengths

Coding performance. Claude consistently ranks first or second on SWE-bench and similar coding benchmarks in 2026. For applications where code generation, review, or debugging is a primary function, Claude is the strongest choice.

Long context handling. Claude's 200k token context window and superior long-context performance make it the right choice for applications processing lengthy contracts, technical documentation, or multi-document research.

Consistency. Claude produces more consistent outputs than GPT-4o on structured tasks — fewer formatting deviations and more reliable adherence to system prompt instructions across a high volume of requests.

Weaknesses

Ecosystem. Anthropic's integration ecosystem is smaller than OpenAI's. Third-party tool support, plugins, and pre-built connectors are less extensive, meaning more custom integration work.

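Pricing. Claude 3.7 Sonnet is priced at $3 per million input tokens and $15 per million output tokens, somewhat above GPT-4o's $2.50 and $10.00. A cheaper Claude tier exists (Claude 3 Haiku at $0.25 input / $1.25 output per million tokens), but it costs more than GPT-4o-mini or Gemini 2.0 Flash, so Claude's options at the low end are less competitive.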

Gemini 2.0: The Cost and Context Leader

What it is

Google's Gemini 2.0 family includes Flash (fast, cheap, 1M context) and Pro (premium quality). Gemini is natively multimodal, designed to process text, images, video, and audio. It is deeply integrated with Google Cloud, Google Workspace, and Google's broader developer infrastructure.

Strengths

Cost efficiency. Gemini 2.0 Flash is priced at approximately $0.10 per million input tokens and $0.40 per million output tokens — the cheapest frontier-tier model in 2026 and a strong default for high-volume, cost-sensitive applications.

Context window. Gemini 2.0 Flash supports up to 1 million tokens of context. Among the models compared here, only the Gemini 2.0 family can take an entire large codebase, a lengthy legal document, or a multi-hour transcript in a single prompt.

Google ecosystem integration. If your business runs on Google Workspace, BigQuery, or Google Cloud, Gemini's native integrations significantly reduce integration complexity.

Weaknesses

Reasoning consistency. Gemini 2.0 Pro is competitive but trails Claude on complex reasoning benchmarks. Gemini Flash trades some quality for cost — appropriate for simpler tasks but less suitable for nuanced multi-step reasoning.

Side-by-Side Comparison

| Model | Context | Input Price | Output Price | Best Strength |
|---|---|---|---|---|
| GPT-4o | 128k tokens | $2.50 / 1M | $10.00 / 1M | Ecosystem & integrations |
| GPT-4o-mini | 128k tokens | $0.15 / 1M | $0.60 / 1M | Cost-efficient simple tasks |
| Claude 3.7 Sonnet | 200k tokens | $3.00 / 1M | $15.00 / 1M | Coding & reasoning |
| Claude 3 Haiku | 200k tokens | $0.25 / 1M | $1.25 / 1M | Fast, affordable Claude tier |
| Gemini 2.0 Flash | 1M tokens | $0.10 / 1M | $0.40 / 1M | Cost & context scale |
| Gemini 2.0 Pro | 1M tokens | $1.25 / 1M | $5.00 / 1M | Premium Gemini quality |
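Per-token prices are easier to compare once they are turned into a monthly bill. The sketch below does that arithmetic with the prices from the table; the workload figures (one million requests a month, 1,000 input and 300 output tokens each) are illustrative assumptions, not measurements, and the function name `monthly_cost` is ours.

```python
# Prices in USD per 1M tokens (input, output), copied from the comparison table.
PRICES = {
    "GPT-4o": (2.50, 10.00),
    "GPT-4o-mini": (0.15, 0.60),
    "Claude 3.7 Sonnet": (3.00, 15.00),
    "Claude 3 Haiku": (0.25, 1.25),
    "Gemini 2.0 Flash": (0.10, 0.40),
    "Gemini 2.0 Pro": (1.25, 5.00),
}


def monthly_cost(model: str, requests_per_month: int,
                 input_tokens: int, output_tokens: int) -> float:
    """Estimated monthly API cost in USD for a fixed per-request token profile."""
    in_price, out_price = PRICES[model]
    per_request = (input_tokens * in_price + output_tokens * out_price) / 1_000_000
    return requests_per_month * per_request


if __name__ == "__main__":
    # Hypothetical workload: 1M requests/month, 1,000 input + 300 output tokens each.
    for model in PRICES:
        cost = monthly_cost(model, 1_000_000, 1_000, 300)
        print(f"{model:<18} ${cost:>10,.2f}")
```

Swap in your own request volume and average token counts; the ranking between models can shift when outputs are much longer than inputs, because output tokens cost several times more than input tokens on every model listed.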

Which Model Should Your Business Choose?

The decision comes down to what your application actually does:

Choose Claude if your application does coding assistance, complex document analysis, or structured data extraction where output consistency matters. Claude's reasoning quality and long-context performance justify its price for these workloads.

Choose GPT-4o if your application requires the broadest range of integrations, you need multimodal input handling, or your team wants the most mature ecosystem with the most support resources. GPT-4o is the lowest-risk choice for general-purpose business applications.

Choose Gemini Flash if you are running high-volume, simpler tasks and cost is a primary constraint. Gemini Flash is also the right choice if you process very large documents or are building on Google Cloud infrastructure.

Use multiple models if you have a mix of task types. Route classification, short summaries, and simple Q&A to Gemini Flash or GPT-4o-mini. Route complex reasoning and code generation to Claude. This model routing pattern is one of the most impactful optimisations in production AI systems.
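As a rough illustration of the routing pattern described above, here is a minimal sketch that maps a task type to a model tier. The task labels and the model name strings are placeholders we chose for the example, not verified API model identifiers, and a production router would usually classify the request first rather than receive a label.

```python
# Placeholder labels for illustration only; substitute the real model IDs
# from each provider's documentation.
CHEAP_MODEL = "gemini-2.0-flash"
STRONG_MODEL = "claude-3.7-sonnet"

# Hypothetical task labels matching the categories named in the text.
SIMPLE_TASKS = {"classification", "short_summary", "simple_qa"}
COMPLEX_TASKS = {"complex_reasoning", "code_generation"}


def route(task_type: str) -> str:
    """Return the model tier for a task type.

    Simple tasks go to the cheap model; complex tasks, and anything
    unrecognised, go to the strong model.
    """
    if task_type in SIMPLE_TASKS:
        return CHEAP_MODEL
    return STRONG_MODEL


if __name__ == "__main__":
    for task in ("classification", "code_generation", "contract_review"):
        print(f"{task} -> {route(task)}")
```

Sending unrecognised task types to the stronger model is a design choice that favours quality over cost. If cost is the tighter constraint, the default can be flipped.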

How to Evaluate Models for Your Specific Use Case

Benchmark results are useful for orientation but not sufficient for a production decision. A sound evaluation process has four steps:

- Define your test set — 50 to 200 representative inputs that reflect real user queries your application will handle.

- Score each model — run all candidate models against your test set and score output quality against labelled ground truth or human evaluation rubrics.

- Measure cost at your expected volume — model pricing is only meaningful in the context of your actual token volumes. Calculate monthly API cost at your projected usage.

- Test latency under load — average response time varies significantly across models and providers, and matters especially for synchronous user-facing applications.
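The scoring and latency steps above can be sketched as a small harness. This is a minimal example under stated assumptions: `model_fn` stands in for whatever function calls a provider's API, `exact_match` is a deliberately crude scorer, and the timing covers sequential calls only, so it is not a substitute for a proper load test with concurrent requests.

```python
import time
from statistics import mean
from typing import Callable


def exact_match(output: str, expected: str) -> float:
    """Crude scorer: 1.0 if the normalised strings match, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def evaluate(model_fn: Callable[[str], str],
             test_set: list[tuple[str, str]],
             scorer: Callable[[str, str], float] = exact_match) -> dict:
    """Run one candidate model over (prompt, expected) pairs.

    Returns the mean score and mean per-call latency in seconds.
    """
    scores, latencies = [], []
    for prompt, expected in test_set:
        start = time.perf_counter()
        output = model_fn(prompt)
        latencies.append(time.perf_counter() - start)
        scores.append(scorer(output, expected))
    return {
        "n": len(test_set),
        "mean_score": mean(scores),
        "mean_latency_s": mean(latencies),
    }


if __name__ == "__main__":
    # Tiny hypothetical test set; a real one would hold 50 to 200 examples.
    test_set = [
        ("Classify: 'I was charged twice'", "billing"),
        ("Classify: 'The app crashes on login'", "technical"),
    ]

    def stub_model(prompt: str) -> str:
        # Stand-in for a real API call; always answers "billing".
        return "billing"

    print(evaluate(stub_model, test_set))
```

Run the same `test_set` through one `model_fn` per candidate model and compare the resulting dictionaries side by side. For free-text outputs, replace `exact_match` with a rubric-based or human-scored function.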

If you want to skip the trial-and-error and get a model recommendation based on your specific use case, our Managed AI Engineer engagement includes an architecture review that covers model selection, API cost modelling, and integration design. Or if you have a defined project, our Outcome-Based AI Project handles model selection as part of the scoping process. Reach out and we can scope it in 48 hours.

Frequently Asked Questions

Which AI model is best for business in 2026?

There is no single best model; it depends on the use case. Claude 3.7 Sonnet is the strongest for coding, complex reasoning, and long-document analysis. GPT-4o is the safest choice for general business applications and teams that need the widest ecosystem of integrations. Gemini 2.0 Flash is the best option for cost-sensitive, high-volume applications. Many production systems in 2026 use at least two models, routed by task complexity.

Is Claude better than GPT-4o for coding?

Yes, as of 2026, Claude 3.7 Sonnet consistently outperforms GPT-4o on coding benchmarks including SWE-bench and HumanEval. Claude's extended thinking mode further improves performance on complex multi-step coding problems. For business applications where code generation, debugging, or code review are primary tasks, Claude is the stronger choice.

When should I use Gemini instead of GPT-4o or Claude?

Use Gemini when cost efficiency is a priority — Gemini 2.0 Flash is significantly cheaper than GPT-4o or Claude Sonnet at scale. Also use Gemini when your application requires very long context windows (up to 1 million tokens), when you process video or audio content, or when your stack is deeply integrated with Google Workspace, BigQuery, or other Google Cloud services.

Can I use multiple AI models in the same application?

Yes, and this is increasingly common in production systems. Model routing sends simple queries to cheaper models like Gemini Flash or GPT-4o-mini, reserving more expensive models like Claude Sonnet or GPT-4o for complex tasks. This can reduce inference costs by 40–70% with minimal quality impact on the simple queries.
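To see where a saving of that size can come from, here is a small worked calculation. It uses the article's list prices for Claude 3.7 Sonnet and Gemini 2.0 Flash and assumes, for simplicity, that simple and complex requests have the same token profile (1,000 input and 300 output tokens). Both the traffic shares and the token counts are illustrative assumptions.

```python
def per_request_cost(in_price: float, out_price: float,
                     input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, given prices per 1M tokens."""
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def routing_savings(simple_share: float, cheap_cost: float,
                    premium_cost: float) -> float:
    """Fraction saved versus sending every request to the premium model."""
    blended = simple_share * cheap_cost + (1 - simple_share) * premium_cost
    return 1 - blended / premium_cost


if __name__ == "__main__":
    premium = per_request_cost(3.00, 15.00, 1_000, 300)  # Claude 3.7 Sonnet
    cheap = per_request_cost(0.10, 0.40, 1_000, 300)     # Gemini 2.0 Flash
    for share in (0.4, 0.6, 0.7):
        saved = routing_savings(share, cheap, premium)
        print(f"{share:.0%} simple traffic -> {saved:.1%} saved")
```

Under these assumptions, routing 60% of traffic to the cheaper model saves about 58% of the bill. Because the cheap model costs only a few percent of the premium one, the saving tracks the share of traffic that can safely be routed away.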

What is the cheapest AI API for production applications in 2026?

Gemini 2.0 Flash is the cheapest frontier-tier model in 2026, priced at approximately $0.10 per million input tokens and $0.40 per million output tokens. GPT-4o-mini and Claude 3 Haiku are also significantly cheaper than their premium counterparts and are appropriate for high-volume simple tasks. For the cheapest inference at any scale, open-source models like Llama 3 self-hosted on cloud GPUs can reduce costs further but require more infrastructure management.

Need help choosing the right AI model for your business?

The wrong model choice can double your inference costs or cut your accuracy in half. Our engineers have built production systems on all three — we'll help you choose and build right.