Why 80% of AI Projects Fail in Production (2026 Guide)

Most AI projects work in demos but fail in production. Here's why, and what separates teams that ship reliable AI from those that don't.

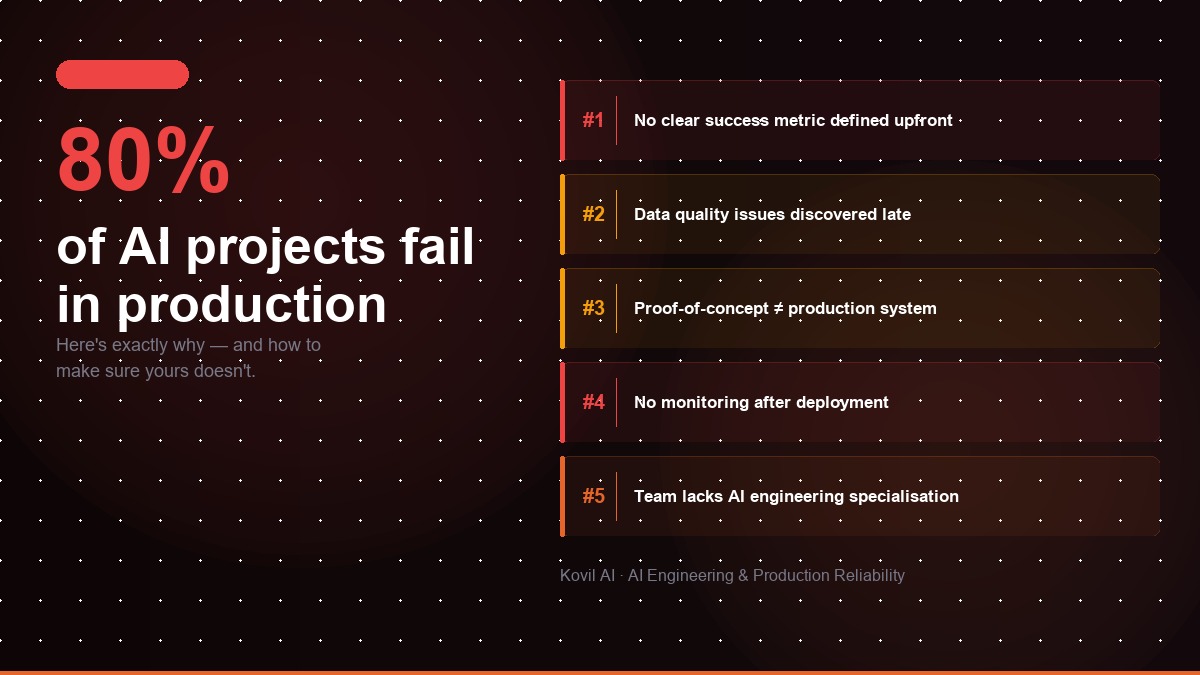

According to Gartner's AI research, roughly 85% of AI projects fail to deliver on their intended business outcomes through 2025. McKinsey Global Institute puts the failure rate closer to 80%. The exact number varies by study, but the direction is consistent: most AI initiatives that reach production either underperform, get quietly shut down, or never make it to real users at all.

AI project failure is defined as any AI initiative that does not achieve its intended business outcome, whether that means never reaching production, underperforming once deployed, or being abandoned after launch due to poor reliability, runaway costs, or low user adoption. Unlike traditional software bugs, AI failure is often gradual and invisible until significant damage has already been done.

Kovil AI · AI Engineering

Our engineers have rescued AI projects from broken proof-of-concept and taken them to production.

This isn't a technology problem. The models are good enough. The frameworks are mature. The compute is accessible. The failure almost always happens in the gap between a working demo and a reliable production system, and that gap is wider and more treacherous than most teams expect.

What Does AI Project Failure Actually Look Like?

AI project failure looks different from traditional software failure. A conventional app either works or it doesn't, the bug is usually deterministic and reproducible. AI failure is messier. It shows up in ways that are easy to miss until real damage is done.

The most common failure modes are not crashes. They are:

- Accuracy degradation over time, the model was accurate at launch but drifts as real-world data diverges from training data.

- Adoption failure, the system works technically but users don't trust it, don't use it, or work around it.

- Cost explosion, the AI works but the API bill is 10x what was budgeted because token usage wasn't modelled at scale.

- Inconsistent outputs, the model gives different answers to the same question, destroying user confidence.

- Silent hallucination, the model confidently outputs wrong information with no guardrails to catch it before it reaches the user.

None of these look like a system crash. All of them will kill an AI product.

Why Do Most AI Projects Work in Demos but Break in Production?

The demo environment is designed for success. You control the inputs, the data is clean, the edge cases are absent, and the person evaluating it is primed to be impressed. Production is designed for chaos. Real users ask unexpected questions, provide malformed inputs, and push the system in directions you never anticipated.

Production data is nothing like development data

Teams build and test their AI on curated, representative examples. Real users bring inconsistent spelling, multiple languages, ambiguous intent, and occasionally malicious inputs. A RAG system that performs well on clean internal documentation will struggle when users ask questions that span multiple documents, use abbreviations not in the corpus, or ask things the documentation simply doesn't cover.

No evaluation framework exists

Software teams have unit tests, integration tests, and CI/CD pipelines. Most AI teams have manual spot-checking. There is no automated system to catch when a prompt change degrades output quality, when retrieval accuracy drops, or when the model starts hallucinating in a specific category of query. Without evals, regressions are invisible until a user complains.

Latency is an afterthought

A response in 8 seconds feels acceptable when you're a developer impressed by the output quality. It feels broken when you're a customer service agent handling 50 simultaneous conversations. AI inference latency is rarely stress-tested properly until it becomes a support ticket.

Error handling is missing

What happens when the LLM API returns a rate limit error? What happens when the vector database returns zero results? What happens when the model returns a response that fails your output schema? Most demo implementations surface an uncaught exception. Production systems need graceful fallbacks for every one of these cases.

What Are the Most Common AI Production Failure Modes?

Based on production deployments across fintech, SaaS, and healthcare, the failure modes that appear most consistently are:

1. Hallucination without guardrails

The model fabricates information confidently and there is no validation layer to catch it before it reaches the user. This is especially damaging in legal, medical, or financial domains where a wrong answer carries real consequences.

2. RAG retrieval quality degrading over time

The knowledge base grows or changes, embeddings become stale, and chunk sizes that worked at launch stop working when the document count triples. Retrieval-augmented systems require ongoing maintenance, they are not set-and-forget infrastructure.

3. Context window overflow

As conversation history grows or retrieved documents increase in number, the context window fills up. Teams that don't implement context management hit token limits mid-conversation, causing truncation or errors that confuse users and erode trust.

4. Cost overruns at scale

A system that calls a large model for every request, with a large context window and no caching strategy, can accumulate a five-figure monthly API bill when traffic increases 10x. Teams that don't model inference costs during development encounter an unpleasant surprise during their first high-traffic period.

5. Prompt injection vulnerabilities

Malicious users discover they can manipulate system behaviour by crafting specific inputs designed to override the system prompt. Customer-facing AI systems without injection guards are a security liability, not just a reliability concern.

| Failure Mode | Root Cause | Fix |

|---|---|---|

| Hallucination without guardrails | No output validation layer | Add schema validation + human review for high-stakes outputs |

| RAG retrieval degrading over time | Stale embeddings, poor chunking strategy | Schedule re-embedding pipeline, tune chunk size per doc type |

| Context window overflow | No conversation or context management | Implement sliding window or conversation summarisation |

| Cost overruns at scale | No token budgeting or caching strategy | Add token counting, caching layer, model routing for simple queries |

| Prompt injection vulnerability | No input sanitisation or output enforcement | Add input guards, output schema enforcement, adversarial testing |

What Do the Successful 20% Do Differently?

The AI projects that succeed in production share a set of practices that are visible before launch:

They treat AI like a product, not a prototype. The moment a demo works, they shift to asking: what does monitoring look like? How do we handle edge cases? What is the rollback plan? What does version control for prompts look like?

They build evaluation pipelines before they ship. Before any change reaches production, a prompt edit, a new retrieval strategy, a model upgrade, it runs through an automated eval suite that scores output quality against labelled test cases. Regressions get caught before users see them.

They design for failure. Every external dependency has a timeout, a retry strategy, and a graceful degradation path. The system works in a degraded mode rather than failing completely when an upstream service has an outage.

They monitor in production. Latency per request, token usage per session, retrieval hit rate, user satisfaction signals, all tracked and alertable. When something starts drifting, the team knows before the user does.

They staff with engineers who have shipped AI before. The gap between an engineer who has built AI demos and one who has shipped reliable AI systems in production is significant. Production AI requires experience with evals, prompt versioning, RAG architecture, cost optimisation, and LLM-specific failure modes that don't appear in most engineering curricula. If your team lacks this depth, a Managed AI Engineer embedded in your team can fill that gap without the cost of a full-time senior hire. For scoped delivery, an Outcome-Based AI Project gives you a fixed deliverable with clear success criteria.

When Should You Bring in Outside AI Engineering Expertise?

There are clear signals that a production AI problem is beyond the current team's experience:

- Your AI demo works reliably but the production version gives inconsistent outputs.

- Your LLM API bill is higher than expected and you're not sure why.

- Users have stopped trusting the AI output and are manually working around it.

- Your RAG system was accurate at launch but quality has degraded over the past few months.

- The team that built the AI feature has moved on, and no one knows how to maintain or improve it.

These are not signs of a bad team. They are signs of a specialisation gap. Production AI reliability is a specific discipline, and most product engineering teams are not staffed for it.

Kovil AI's AI Reliability & App Rescue service is built for exactly this situation. Our engineers audit your current system, identify the failure modes, and fix them, with clear milestones so you know what you're getting before we start. If your AI app is underperforming in production, get in touch and we'll diagnose what's wrong within 48 hours.

Frequently Asked Questions

What is AI project failure?

AI project failure is any AI initiative that does not achieve its intended business outcome, whether that means never reaching production, underperforming once deployed, or being abandoned after launch due to poor reliability, runaway costs, or low user adoption. Unlike traditional software bugs, AI failure is often gradual and invisible until significant damage has been done.

What percentage of AI projects fail?

According to Gartner, approximately 85% of AI projects fail to deliver on their intended business outcomes. McKinsey estimates the failure rate at around 80%. The consistent finding across research is that the majority of AI initiatives either underperform, get shut down, or never reach real users.

Why do most AI projects fail in production?

Most AI projects fail in production because they are built and tested in controlled demo environments that don't reflect real-world conditions. Key reasons include: production data being far messier than development data, no automated evaluation framework to catch regressions, missing error handling for LLM API failures, and teams that lack experience shipping AI in production rather than just building demos.

What is the most common AI production failure mode?

The most common AI production failure modes are hallucination without guardrails (the model outputs wrong information confidently with no validation layer), RAG retrieval quality degrading over time as the knowledge base grows, and cost overruns when token usage wasn't properly modelled at scale.

How do you prevent AI project failure?

To prevent AI project failure: build automated evaluation pipelines before shipping, design explicit error handling and fallback paths for every external dependency, monitor latency and output quality in production from day one, and staff the project with engineers who have previously shipped AI systems in production, not just built demos.

When should a company bring in outside AI engineering expertise?

Bring in outside AI engineering expertise when your AI demo works but the production version gives inconsistent outputs, when your LLM API bill is unexpectedly high, when users have stopped trusting the AI output and are working around it manually, or when the team that originally built the AI feature has moved on and no one knows how to maintain it.

Kovil AI · AI Engineering

Don't let your AI project become another statistic.

The failure points are predictable — and avoidable. Our engineers have rescued AI projects from broken proof-of-concept and taken them to production. We know what it takes.