The Hidden Cost of Unmaintained Software (2026 Guide)

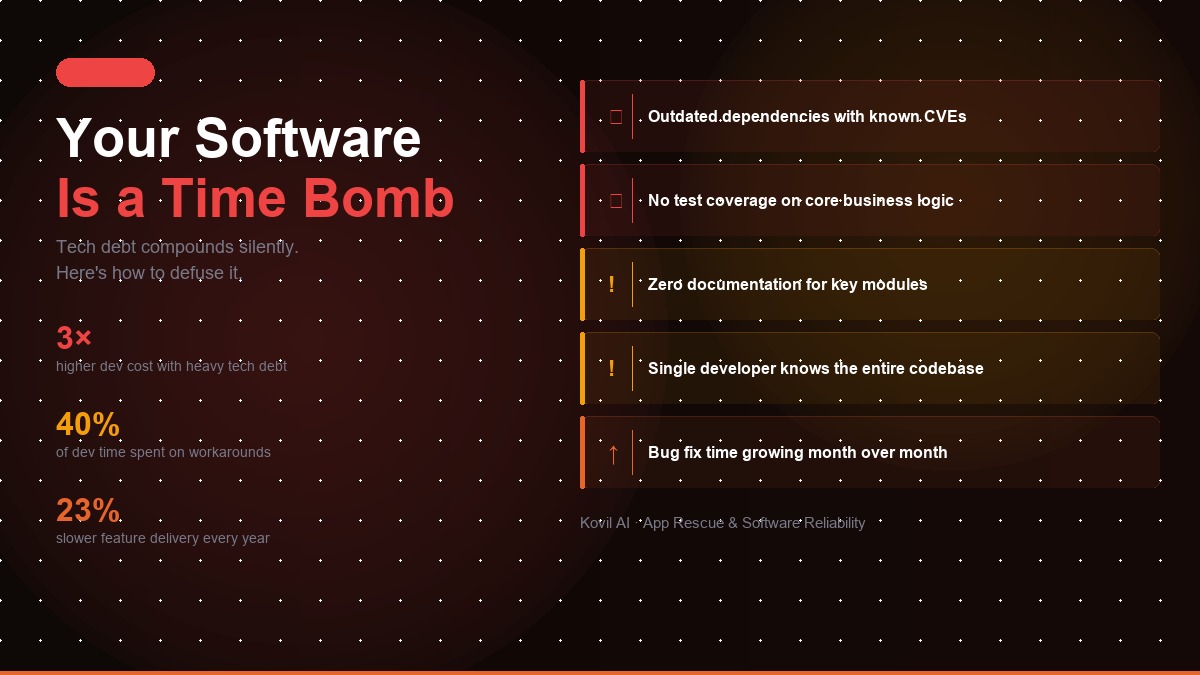

Ignoring your codebase after launch is the most expensive mistake a growing company can make. Here's the data, the warning signs, and what to do about it.

There's a moment that happens in most growing companies. The product shipped. Users are on board. Revenue is coming in. The founding team, exhausted from the build, turns their attention to sales, marketing, and fundraising. The codebase, the engine under the hood of the business, gets quietly moved to the back burner.

For a few months, nothing visible happens. Then the calls start coming in.

A payment flow breaks. A key integration stops working after a third-party API update. A security vulnerability is found. A performance issue under new load causes timeouts. A developer leaves, and nobody else understands the part of the system they built.

The codebase was always accumulating risk. You just couldn't see it from the sales floor.

The Hidden Cost of Deferred Maintenance

Technical debt is the term developers use for the accumulated cost of shortcuts taken, decisions deferred, and maintenance skipped. It compounds like financial debt: the longer you ignore it, the more expensive it becomes to address.

The numbers are significant. In a McKinsey survey, CIOs estimated that technical debt amounts to 20-40% of the value of their entire technology estate, and that 10-20% of the budget earmarked for new products is diverted to resolving debt-related issues. For smaller companies, the ratio is often worse, because the codebase is less structured, documentation is sparse, and fewer developers understand the full system.

These same patterns compound the risks we cover in our post on why AI projects fail in production: the codebase problems that kill new AI features are usually maintenance problems that were ignored months earlier. But the real cost of an unmaintained codebase isn't just developer time; it's business impact:

- Downtime costs money. For e-commerce companies, every hour of downtime is lost revenue. For SaaS businesses, outages trigger SLA breaches, support escalations, and churn.

- Bugs erode trust. A user who encounters a broken flow once might forgive it. A user who encounters it twice is evaluating your competitors.

- Security vulnerabilities create existential risk. IBM's 2024 Cost of a Data Breach Report put the average cost of a single data breach at $4.88 million in damages, remediation, regulatory fines, and reputational harm.

- Developer velocity degrades. As technical debt accumulates, every new feature takes longer to build because developers spend more time working around existing messiness. What took a day in year one takes a week in year three.

The Compounding Effect of Dependency Drift

One of the most underappreciated maintenance risks is dependency drift: the accumulated divergence between the library and framework versions your application depends on and the versions that are currently supported.

Every dependency in your application stack is a surface area for security vulnerabilities. When a critical vulnerability is discovered in a widely-used package, a patch is released. Companies with current dependencies can apply it in minutes. Companies running outdated stacks face a much harder problem: upgrading the vulnerable package may require upgrading five other packages, which may require refactoring sections of code, which may introduce new bugs.

This is why companies that defer dependency updates end up in a position where upgrading anything is a months-long project. The bill for years of deferred maintenance comes due all at once.

Staying current with dependencies isn't about chasing the latest version for its own sake. It's about ensuring that when a critical security patch is needed, you can apply it in hours, not weeks.
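One rough way to put a number on drift is to count how many major versions each dependency trails its latest release. The sketch below assumes you already have the installed and latest versions as plain dictionaries; the package names, version numbers, and function names are hypothetical, and the version parsing is deliberately simplistic.

```python
import re
from typing import Dict, Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn '4.17.21' into (4, 17, 21), keeping only each part's leading digits."""
    parts = []
    for piece in version.split("."):
        match = re.match(r"\d+", piece)
        parts.append(int(match.group()) if match else 0)
    return tuple(parts)


def majors_behind(installed: str, latest: str) -> int:
    """How many major versions the installed release trails the latest one."""
    return max(0, parse_version(latest)[0] - parse_version(installed)[0])


def drift_report(installed: Dict[str, str], latest: Dict[str, str]) -> Dict[str, int]:
    """Map each package with a known latest version to its major-version lag."""
    return {
        name: majors_behind(version, latest[name])
        for name, version in installed.items()
        if name in latest
    }


# Hypothetical packages and versions, for illustration only.
installed = {"web-framework": "2.3.1", "payments-sdk": "7.0.0", "http-client": "0.21.4"}
latest = {"web-framework": "5.0.2", "payments-sdk": "7.4.1", "http-client": "1.6.0"}

report = drift_report(installed, latest)
# Packages two or more major versions behind are the likeliest to turn a patch into a project.
stale = sorted(name for name, lag in report.items() if lag >= 2)
```

Tracking a figure like this month over month shows whether drift is shrinking or growing, which is usually more useful than any single snapshot.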

What Actually Breaks in an Unmaintained Codebase

If you've been running without active maintenance, here's a realistic picture of what's accumulating:

API deprecations and breaking changes

Third-party APIs (payment processors, communication tools, data providers, identity services) evolve constantly. When an external API version is deprecated, integrations that haven't been updated break. This is one of the most common causes of sudden, unexpected failures in production, and it's largely preventable with proactive monitoring.
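Some providers announce a retirement date in the `Sunset` HTTP response header (RFC 8594), which makes one kind of proactive monitoring easy to automate. The sketch below assumes the provider sends that header, which many do not; the function name is hypothetical, and changelogs and deprecation emails still need a human reader.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Mapping, Optional


def days_until_sunset(headers: Mapping[str, str], now: datetime) -> Optional[int]:
    """Return whole days until the announced retirement date, or None if no date is given.

    Reads the `Sunset` response header, whose value is an HTTP date.
    """
    # Header names are case-insensitive, so normalise before looking up.
    normalized = {key.lower(): value for key, value in headers.items()}
    sunset = normalized.get("sunset")
    if sunset is None:
        return None
    retire_at = parsedate_to_datetime(sunset)
    return (retire_at - now).days


# Example response headers from a hypothetical third-party API.
example_headers = {"Content-Type": "application/json", "Sunset": "Thu, 31 Dec 2026 23:59:59 GMT"}
checked_at = datetime(2026, 12, 1, tzinfo=timezone.utc)
remaining = days_until_sunset(example_headers, checked_at)
```

A scheduled job could run a check like this against each integration and open a ticket once the remaining time drops below whatever threshold the team needs to plan a migration.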

Security vulnerabilities in dependencies

The National Vulnerability Database adds tens of thousands of new vulnerability entries annually. Any given application stack runs on dozens of open-source dependencies, each of which may have known vulnerabilities. Running npm audit or pip-audit on a long-neglected project typically reveals dozens to hundreds of vulnerability warnings.
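An audit report is more useful when something acts on it. The sketch below summarises the severity counts from `npm audit --json` and decides whether a release should be blocked. It assumes the `metadata.vulnerabilities` object that recent npm versions include in that report, so check the shape against your own npm version; the sample report, function names, and blocking threshold are illustrative.

```python
import json
from typing import Dict

SEVERITIES = ("critical", "high", "moderate", "low", "info")


def summarize_audit(report_json: str) -> Dict[str, int]:
    """Extract per-severity counts from an `npm audit --json` report."""
    report = json.loads(report_json)
    counts = report.get("metadata", {}).get("vulnerabilities", {})
    return {level: int(counts.get(level, 0)) for level in SEVERITIES}


def should_block_release(summary: Dict[str, int], max_high: int = 0) -> bool:
    """Block on any critical finding, or on more high findings than allowed."""
    return summary["critical"] > 0 or summary["high"] > max_high


# Trimmed, made-up report in the assumed shape.
sample_report = json.dumps({
    "auditReportVersion": 2,
    "metadata": {
        "vulnerabilities": {
            "info": 0, "low": 12, "moderate": 31, "high": 9, "critical": 2, "total": 54
        }
    },
})

summary = summarize_audit(sample_report)
blocked = should_block_release(summary)
```

Wiring a check like this into the build pipeline turns a long list of warnings into a single pass or fail signal that cannot be quietly ignored.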

Performance degradation under scale

Database queries that worked fine with 10,000 records struggle with 1 million. Infrastructure provisioned for early-stage traffic doesn't scale smoothly as the business grows. Without proactive monitoring and optimisation, performance degradation is often invisible until it causes user-facing problems.
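The query problem is often visible in the database's own query plan long before users feel it. The sketch below uses SQLite from the Python standard library so it runs anywhere; a production database has its own `EXPLAIN` output, and the table, column, and index names here are hypothetical.

```python
import sqlite3
from typing import List, Sequence


def query_plan(conn: sqlite3.Connection, sql: str, params: Sequence = ()) -> List[str]:
    """Return the human-readable detail column of SQLite's EXPLAIN QUERY PLAN."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[-1] for row in rows]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, 9.99) for i in range(1000)],
)

lookup = "SELECT * FROM orders WHERE customer_id = ?"

# Without an index, the plan reports a scan of the whole table.
plan_before = query_plan(conn, lookup, (42,))

# After adding an index on the filtered column, the plan switches to an index search.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn, lookup, (42,))
```

A full scan costs little at 10,000 rows and a great deal at 1 million, which is why reviewing plans for the most frequent queries belongs in routine maintenance.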

Documentation decay

Codebases grow. Original developers move on. The person who understands why a critical section of code was written a certain way leaves the company, and that knowledge leaves with them. Within 18 months of a codebase being written, the people who wrote it are often working elsewhere, and the implicit knowledge that kept the system running safely exists nowhere.

Infrastructure drift

Cloud infrastructure evolves quickly. Hosting providers deprecate services, change pricing, and retire runtime environments. Companies that haven't been actively managing their infrastructure often discover, at the worst possible moment, that their deployment environment is no longer supported.

The Three Stages of Codebase Neglect

We've seen the same pattern across dozens of companies:

Stage 1 (0-12 months post-launch): Stable. The code works. Issues are rare. Developers who built it are still around. The system is understood.

Stage 2 (12-24 months): Degrading. Dependencies drift. A few integrations break. Bugs accumulate. The original developers have moved on. New features take longer than expected. Nobody fully understands the codebase anymore.

Stage 3 (24+ months): Crisis mode. A major incident (a security breach, a catastrophic bug, or a critical dependency failure) forces emergency action. The cost of remediation is 5-10x what proactive maintenance would have been.

The cruel irony is that the period of apparent stability in Stage 1 creates a false sense of security. The codebase isn't fine; it's just not visibly broken yet. If you're in Stage 3, the right move is an App Rescue engagement: a structured stabilisation sprint before the next feature build begins. If you're still in Stage 2, a Managed AI Engineer retainer keeps you from ever reaching Stage 3.

What Good Maintenance Actually Looks Like

Proactive codebase maintenance isn't just fixing bugs when they appear. It's a structured, ongoing practice that keeps the codebase healthy, secure, and moving forward. Here's what it covers:

Bug triage and resolution

Not all bugs are created equal. Critical issues (failures that affect revenue, security, or significant numbers of users) need to be resolved within hours. Standard bugs need clear SLA targets, typically 48-72 hours. A well-run maintenance operation has defined priorities and demonstrably meets them.
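Severity-based targets are easier to meet when they are written down as data instead of living in someone's head. The sketch below is one way to do that. The 4-hour figure is an assumed reading of "within hours", the 72-hour figure is the upper end of the range above, and all names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumed targets: adjust to whatever your own SLA actually promises.
SLA_TARGETS = {
    "critical": timedelta(hours=4),   # assumption: "within hours" read as 4 hours
    "standard": timedelta(hours=72),  # upper end of the 48-72 hour range
}


def resolution_deadline(severity: str, reported_at: datetime) -> datetime:
    """Latest time a bug of this severity can be resolved without breaching the SLA."""
    return reported_at + SLA_TARGETS[severity]


def is_breached(severity: str, reported_at: datetime, now: datetime) -> bool:
    """True once the current time has passed the resolution deadline."""
    return now > resolution_deadline(severity, reported_at)


reported = datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)
critical_due = resolution_deadline("critical", reported)
standard_due = resolution_deadline("standard", reported)
```

Recording the deadline at triage time also makes the "demonstrably meets them" part checkable: breaches can be counted per quarter instead of argued about.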

Dependency management

Regular dependency audits, automated vulnerability scanning, and a clear upgrade cadence keep the security surface area manageable. Monthly dependency reviews and quarterly upgrade sprints are a reasonable baseline for most applications.

Performance monitoring and optimisation

Proactive monitoring catches performance degradation before users notice. Response time tracking, database query analysis, and error rate monitoring give you visibility into how the system is behaving under real conditions and flag problems before they escalate.
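Two of the signals mentioned above, response time and error rate, reduce to small calculations over request logs. The sketch below shows a nearest-rank percentile and a server-error rate; the sample data and function names are made up, and a real setup would normally lean on a monitoring tool instead of hand-rolled code.

```python
import math
from typing import Sequence


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def error_rate(status_codes: Sequence[int]) -> float:
    """Share of responses that were server errors (HTTP 5xx)."""
    if not status_codes:
        return 0.0
    return sum(1 for code in status_codes if code >= 500) / len(status_codes)


# Made-up response times in milliseconds; one slow outlier hides behind a healthy median.
latencies_ms = [120, 95, 110, 2300, 105, 98, 130, 101, 99, 115]
p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
errors = error_rate([200, 200, 500, 200])
```

Tail percentiles matter because an average or median can look healthy while a meaningful share of users wait seconds for a response.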

Technical debt reduction

Every quarter, some portion of maintenance capacity should go toward reducing accumulated technical debt. Refactoring poorly structured code, improving test coverage, and removing deprecated patterns keep the system progressively easier to work in, rather than progressively harder.

Feature updates

Most running products have a steady stream of small improvements: enhanced reporting, new integration points, UI refinements, and configuration options users have requested. A maintenance retainer typically covers these alongside reactive bug fixes.

Quarterly roadmap planning

The best maintenance relationships include a quarterly planning touchpoint: reviewing the technical health of the system, prioritising the technical debt backlog, and planning upcoming feature work. This keeps maintenance aligned with business goals rather than purely reactive.

The "We'll Handle It In-House" Problem

Many companies attempt to handle maintenance with an internal developer who has maintenance as a part of their role. This works until it doesn't, usually around the time that developer leaves, or when a major incident demands more bandwidth than one person has.

The structural problem with single-person maintenance is bus factor: if one person is the only one who understands the system, the organisation is one resignation away from a crisis. This isn't a reflection on the developer; it's a structural risk that needs to be addressed through redundancy and documentation.
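Bus factor can be made concrete by listing which parts of the system only one person understands. The sketch below assumes a hand-maintained map from module to the people who can safely change it; the module names, people, and function names are hypothetical. In practice such a map might be approximated from commit history, though authorship is only a rough proxy for understanding.

```python
from typing import Dict, List, Set


def single_owner_modules(knowledge: Dict[str, Set[str]]) -> List[str]:
    """Modules that exactly one person understands."""
    return sorted(module for module, people in knowledge.items() if len(people) == 1)


def orphaned_if_leaves(knowledge: Dict[str, Set[str]], person: str) -> List[str]:
    """Modules nobody would understand if this person left."""
    return sorted(module for module, people in knowledge.items() if people == {person})


# Hypothetical knowledge map for a small product team.
knowledge = {
    "billing": {"asha"},
    "auth": {"asha", "marco"},
    "reporting": {"marco"},
    "notifications": {"asha", "marco", "lin"},
}

at_risk = single_owner_modules(knowledge)
lost_with_asha = orphaned_if_leaves(knowledge, "asha")
```

Each entry in the single-owner list is a candidate for pairing, a handover session, or written documentation before it becomes an emergency.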

External maintenance partners bring a different model: multiple developers who understand the system, defined SLAs with accountability, and a process designed specifically for ongoing support rather than net-new development.

Signs You Need a Maintenance Partner Now

You should be seriously evaluating a maintenance arrangement if:

- Your last significant codebase update was more than six months ago

- The developer who built your product no longer works at your company, or spends most of their time on new development

- You've had more than two production incidents in the last six months

- Running npm audit or a dependency check produces dozens of warnings

- You can't clearly state who is responsible for responding to a production incident

- You don't have defined response time expectations for bug reports

- You've deferred known technical debt for more than a year

Any one of these is a yellow flag. More than two is a red one.

The Real Numbers: What Software Neglect Actually Costs

The business case for proactive maintenance becomes clearer when you put concrete numbers against it:

- CIOs surveyed by McKinsey estimate technical debt at 20-40% of the value of their entire technology estate. Money spent servicing that debt produces no new capability; it only pays for existing mess.

- The average cost of a data breach is $4.88 million (IBM Cost of a Data Breach Report, 2024), and breaches often exploit vulnerabilities that sat unpatched in the codebase for months or years.

- Emergency incident response costs 5-10x more than prevention. A critical bug that takes a two-engineer week to fix reactively would typically have taken a half-day to prevent with proactive monitoring.

- Developer velocity declines 30-40% in neglected codebases — meaning new features that should take two weeks take three, compounding across every sprint for the life of the product.

- Dependency upgrade projects balloon in neglected stacks. A single security patch that should take hours can require weeks of refactoring when dependencies haven't been touched in 18 months.
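The velocity figure in the list above can be checked with a line of arithmetic. The sketch assumes that a velocity decline means the team completes that much less work per week, so delivery time stretches by a factor of 1 / (1 - decline); the function name is hypothetical.

```python
def slowed_duration(planned_weeks: float, velocity_decline: float) -> float:
    """Weeks needed for work planned at full velocity once velocity has dropped.

    velocity_decline is a fraction, for example 0.30 for a 30% decline.
    """
    if not 0 <= velocity_decline < 1:
        raise ValueError("velocity_decline must be at least 0 and less than 1")
    return planned_weeks / (1 - velocity_decline)


# A two-week feature at the low and high ends of the 30-40% range.
low_end = round(slowed_duration(2, 0.30), 2)
high_end = round(slowed_duration(2, 0.40), 2)
```

Under that assumption a two-week feature lands at roughly three weeks, in line with the figure quoted, and the same multiplier applies to every sprint that follows.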

Before and After: Proactive vs. Reactive Maintenance

| Scenario | Reactive (No Maintenance) | Proactive (Retainer) |
|---|---|---|
| Security vulnerability found | 2-6 weeks to patch (dependency chain); potential breach exposure | Patched within hours; already on current dependencies |
| Third-party API deprecated | Integration breaks in production; emergency dev sprint required | Migration planned ahead of deprecation date |
| Performance degrades under new load | Users notice first; root cause investigation under pressure | Caught in monitoring before user impact |
| Key developer leaves | Knowledge gap; system partially understood by no one | Documentation current; multiple engineers familiar with system |
| New feature request | Delayed by technical debt; 2x estimated dev time | Clean foundation; delivered on schedule |
| Annual cost | Unpredictable; $50K–$500K+ in incident costs | Predictable retainer; fraction of incident cost |

The ROI of Proactive Maintenance

The question isn't whether you'll pay to deal with your codebase; it's whether you'll pay on your terms or in a crisis.

A maintenance retainer typically costs a fraction of what a single major incident costs to remediate. When we've helped companies recover from production crises (breaches, complete service failures, catastrophic bugs), the emergency remediation cost almost always exceeded a full year of the proactive maintenance that would have prevented the crisis.

Beyond cost avoidance, there's an upside case: a codebase that's actively maintained moves faster. New features take less time to build. Developers spend less time fighting the existing system. The business moves at the speed the market demands, rather than the speed the technical debt allows.

The Bottom Line

The codebase running your business is not a static asset. It's a living system in a constantly changing environment: changing dependencies, changing APIs, changing security threats, changing user loads. Treating it as something that doesn't need ongoing attention is the equivalent of never servicing a car engine and being surprised when it fails.

The question isn't whether your unmaintained codebase will cause problems. It's when, and how much it will cost when it does. Proactive maintenance isn't a nice-to-have. It's the difference between a crisis that disrupts your business and a minor issue resolved before your users notice it. If your codebase is already showing signs of neglect, AI Reliability & App Rescue is built for exactly this situation.

Frequently Asked Questions

What is technical debt and why does it matter?

Technical debt is the accumulated cost of shortcuts taken, decisions deferred, and maintenance skipped during software development. Like financial debt, it compounds over time: the longer it goes unaddressed, the more expensive it becomes to fix. In a McKinsey survey, CIOs estimated that technical debt amounts to 20-40% of the value of their entire technology estate. That burden reduces velocity and increases the risk of failures.

How much does a software production incident cost compared to proactive maintenance?

IBM's 2024 Cost of a Data Breach Report put the average cost of a single data breach at $4.88 million in damages, remediation, regulatory fines, and reputational harm. Emergency remediation of major production crises almost always exceeds a full year of the proactive maintenance that would have prevented the incident. A maintenance retainer typically costs a small fraction of what a single major incident costs to resolve.

What are the warning signs that a codebase needs urgent maintenance?

Key warning signs include: your last significant codebase update was more than six months ago; the developer who built the product no longer works at your company; you've had more than two production incidents in the last six months; running npm audit or a dependency check produces dozens of warnings; no one can clearly state who is responsible for responding to a production incident; you have no defined response time expectations for bug reports; and you have deferred known technical debt for more than a year. Any one of these is a yellow flag; more than two is a red flag requiring immediate action.

How often should software dependencies be updated?

Monthly dependency audits combined with quarterly upgrade sprints are a reasonable baseline for most applications. The goal is not to chase the latest version for its own sake; it is to ensure that when a critical security patch is released, you can apply it in hours rather than weeks. Companies that defer dependency updates often discover that upgrading one vulnerable package requires upgrading five others, turning a one-hour fix into a months-long project.

What does a software maintenance retainer typically include?

A well-structured maintenance retainer covers: bug triage and resolution (with defined SLA targets by severity); dependency management and vulnerability scanning; performance monitoring and optimisation; technical debt reduction (typically 20-25% of retainer capacity per quarter); small feature updates and integration maintenance; and quarterly roadmap planning sessions to align technical health with business priorities. The goal is both reactive coverage and proactive improvement.

Kovil AI · App Rescue

Is your software becoming a liability?

Outdated dependencies, missing tests, mounting tech debt — we've seen it all. Our App Rescue service gets your codebase back to a stable, maintainable state before it becomes a crisis.