How to Build an LLM-Powered Chatbot for Your Business (2026 Guide)

Step-by-step guide to building a production-ready AI chatbot using OpenAI or Claude APIs, with RAG architecture, LLM comparison, and real deployment costs.

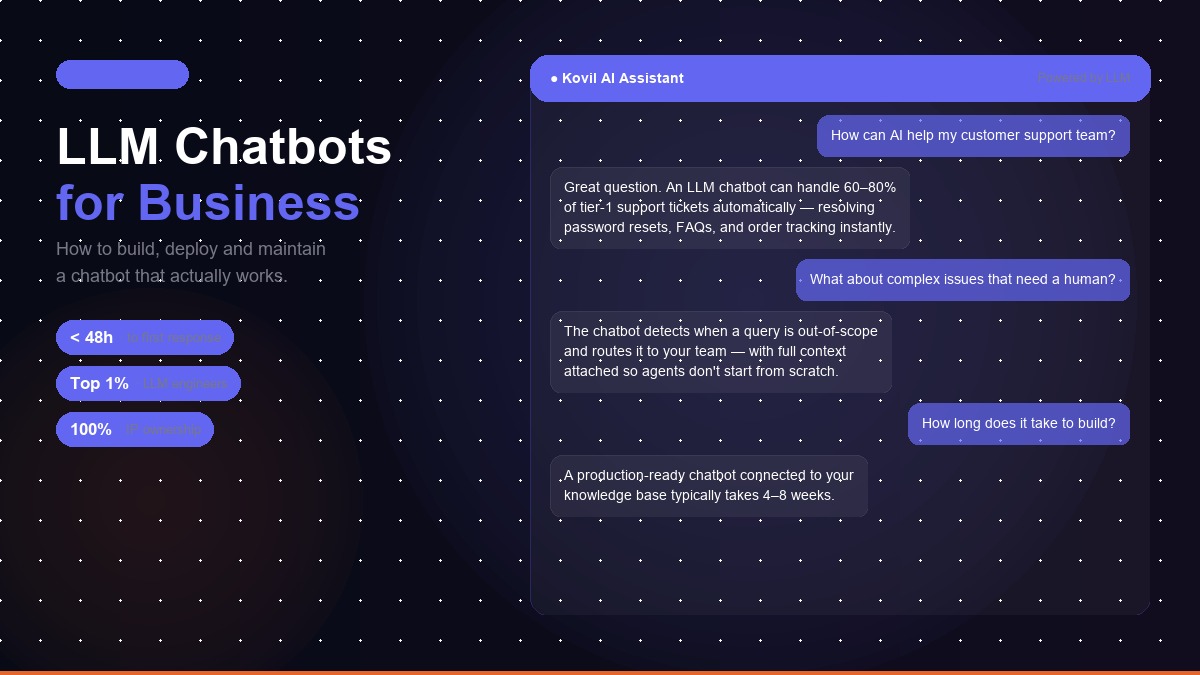

Building an LLM-powered chatbot for your business is one of the highest-ROI AI integrations available today. Done well, it reduces support load, answers questions instantly at any hour, and creates a more responsive experience for customers and employees alike. Done poorly, it confidently gives wrong answers and erodes user trust, leaving you worse off than having no chatbot at all.

This guide walks through the complete process, from architecture decisions to deployment, with a focus on building something that works reliably in production, not just in demos.

Kovil AI · AI Chatbot Build

We build LLM chatbots connected to your data and deployed in your stack — not generic wrappers.

Rule-Based vs LLM-Powered: What's the Difference?

Before getting into the how, it's worth being clear on what makes an LLM-powered chatbot different from the rule-based chatbots that most businesses have encountered.

Rule-based chatbots follow pre-defined decision trees. User says X → bot says Y. These work well for highly structured, predictable queries with limited variation. They break immediately when a user says something the tree wasn't built for.

LLM-powered chatbots understand natural language in context. A user can ask the same question five different ways, include spelling errors, add irrelevant context, or phrase it as a statement rather than a question, and the model understands the intent. They're also capable of multi-turn conversations that maintain context across exchanges.

The tradeoff: LLM-based systems are more capable but require more careful design to prevent hallucination, the tendency of language models to confidently generate plausible-sounding but incorrect information. The architecture choices we cover below are primarily about managing this risk.

Best LLM for Business Chatbots in 2026

In 2026, the leading commercial options for business chatbot applications are OpenAI's GPT-4o and Anthropic's Claude 3.5/3.7. Both are production-capable, and the right choice depends on your specific use case. If you're unsure whether your use case calls for a chatbot or an AI agent, see our comparison of AI agents vs AI chatbots before diving into the architecture.

| Model | Speed | Cost (USD per 1M tokens) | Best For |
|---|---|---|---|
| GPT-4o (OpenAI) | Fast | $2.50 input / $10 output | Structured tasks, JSON output, internal tools, code generation |
| Claude 3.7 Sonnet (Anthropic) | Fast | $3 input / $15 output | Customer-facing support, nuanced reasoning, long-document analysis, compliance-sensitive contexts |
| GPT-4o mini (OpenAI) | Very fast | $0.15 input / $0.60 output | High-volume, cost-sensitive chatbots with well-defined scope |
| Llama 3.1 70B (self-hosted) | Variable | Infrastructure cost only (~$1–3 effective) | Data-sensitive use cases requiring on-premise deployment |
| Gemini 1.5 Pro (Google) | Fast | $1.25 input / $5 output | Google Workspace integrations, multimodal inputs (text + image) |

Our recommendation for most business chatbots: Start with GPT-4o for internal tools and structured workflows. Use Claude 3.7 Sonnet for customer-facing support where nuanced, policy-grounded responses matter. Switch to GPT-4o mini for high-volume tiers once the chatbot is validated in production.

The Critical Architecture Decision: RAG vs Fine-Tuning

The most important architectural decision in building a business chatbot is how you give the model knowledge of your business. There are two primary approaches:

Fine-tuning

Fine-tuning means further training a model on your proprietary data (product information, support conversations, company documentation). The result is a model that has internalized your domain knowledge.

Fine-tuning sounds appealing but is rarely the right choice for business chatbots. It's expensive, time-consuming, and, critically, it bakes knowledge into the model weights. When your information changes (prices update, policies evolve, new products launch), you need to fine-tune again. For most businesses, the cost and complexity outweigh the benefit.

Retrieval-Augmented Generation (RAG)

RAG is the architecture behind most successful production business chatbots. Instead of baking knowledge into the model, you keep it in a searchable knowledge base. At query time, you retrieve the most relevant chunks of information and include them in the model's context window, alongside the user's question and your system prompt.

The model then generates a response grounded in the retrieved information, rather than relying on its training data. This dramatically reduces hallucination because you're asking the model to reason about specific text you've provided, not to recall facts from training.

RAG also solves the freshness problem: when your information changes, you update the knowledge base, not the model. New products, updated policies, and revised FAQs can all be reflected in chatbot responses as soon as the knowledge base is updated.

For the vast majority of business chatbots, RAG is the right architecture. The rest of this guide assumes RAG. For a broader view of where chatbot development fits in the full project lifecycle, our AI development lifecycle guide covers each phase from problem definition through to production monitoring.

The Technical Stack

A production RAG chatbot has four main components:

1. Knowledge Base (Vector Database)

Your information (documents, FAQs, product pages, policy documents) is split into "chunks" of text, converted into vector embeddings (numerical representations of semantic meaning), and stored in a vector database. When a user asks a question, the query is converted to an embedding with the same model, and the chunks whose embeddings are most similar to it are retrieved.

Recommended vector databases: Pinecone if you want a fully managed service with no infrastructure overhead; Weaviate or Qdrant if you want to self-host with more control; pgvector (a Postgres extension) if you want to keep everything in your existing database.

2. Embedding Model

Embedding models convert text to vectors. OpenAI's text-embedding-3-large is a widely used choice for high-quality embeddings. For cost-sensitive applications, text-embedding-3-small offers good performance at a fraction of the price ($0.02 vs $0.13 per million tokens). Both are available through the OpenAI API.
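The following is a minimal sketch of embedding documents in batches. It assumes the official `openai` Python SDK (v1+), where `client.embeddings.create(model=..., input=[...])` returns an object whose `.data` items carry `.index` and `.embedding`. The helper name `embed_texts` and the batch size of 100 are illustrative choices, not requirements.

```python
from typing import Any, List, Sequence


def embed_texts(
    client: Any,
    texts: Sequence[str],
    model: str = "text-embedding-3-small",
    batch_size: int = 100,
) -> List[List[float]]:
    """Embed texts in batches. `client` is assumed to be an openai.OpenAI() instance."""
    vectors: List[List[float]] = []
    for start in range(0, len(texts), batch_size):
        batch = list(texts[start:start + batch_size])
        response = client.embeddings.create(model=model, input=batch)
        # Sort by index so the output order always matches the input order.
        for item in sorted(response.data, key=lambda d: d.index):
            vectors.append(item.embedding)
    return vectors


# Usage (assumed setup, requires an API key):
# from openai import OpenAI
# client = OpenAI()  # reads OPENAI_API_KEY from the environment
# vectors = embed_texts(client, ["How do I reset my password?"])
```

Batching keeps the number of API calls low when indexing hundreds of documents. Use the same `model` value for indexing and for embedding queries, or similarity scores become meaningless.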

3. Orchestration Layer

The orchestration layer connects all components and handles the query pipeline: receive user message → retrieve relevant chunks → build prompt → call LLM → return response. This is typically built with:

- LangChain: The most widely used framework for RAG applications, with extensive tooling, good documentation, and an active community.

- LlamaIndex: Particularly strong for document-heavy RAG applications with complex retrieval requirements.

- Custom implementation: For teams comfortable with the underlying APIs, building directly against the OpenAI/Anthropic and vector DB APIs gives more control and is often more performant.

4. Chat Interface

The front-end component where users interact. This might be a widget on your website, an embedded component in your application, a Slack or Teams integration, or a standalone web application. The implementation varies significantly by platform, but the core API calls are the same.

Step-by-Step: Building Your Chatbot

Step 1: Define the scope precisely

What questions should this chatbot answer? What should it refuse to answer? What should it do when it doesn't know the answer?

These decisions shape everything. A chatbot without clear scope boundaries will answer questions it shouldn't, hallucinate information it doesn't have, and create support problems rather than solving them.

Write down four things: the specific use cases this chatbot should handle; the information sources it should use; the response format it should follow; and the fallback behaviour when it can't answer (escalate to a human, provide contact information, or acknowledge uncertainty clearly).

Step 2: Prepare and structure your knowledge base

This step is where most teams underinvest and where most chatbot failures originate. The quality of your knowledge base determines the quality of your chatbot's answers.

Gather your source documents: product documentation, FAQs, support articles, policy documents, pricing information. Review each for accuracy and freshness, because stale or incorrect information in your knowledge base produces incorrect chatbot responses.

Chunk the documents appropriately. Chunks that are too long dilute relevance, and chunks that are too short lose context. For most business content, chunks of 300–600 tokens with 50–100 tokens of overlap are a reasonable starting point. Add metadata (source URL, document type, last-updated date) to each chunk. This improves retrieval quality and lets responses cite their sources.
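Below is a minimal sketch of overlapping chunking with metadata. To stay dependency-free, it approximates tokens with whitespace-separated words. A production pipeline would count real tokens with the model's tokenizer (for example, OpenAI's `tiktoken`). The `Chunk` type and the `chunk_index` field are illustrative.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Chunk:
    text: str
    metadata: Dict[str, str] = field(default_factory=dict)


def chunk_document(
    text: str,
    metadata: Dict[str, str],
    chunk_size: int = 400,
    overlap: int = 75,
) -> List[Chunk]:
    """Split text into overlapping windows of ~chunk_size words (a stand-in for tokens)."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks: List[Chunk] = []
    for start in range(0, len(words), step):
        window = words[start:start + chunk_size]
        # Copy the document's metadata onto every chunk and record its position.
        chunks.append(Chunk(" ".join(window), {**metadata, "chunk_index": str(len(chunks))}))
        if start + chunk_size >= len(words):
            break  # this window already reaches the end of the document
    return chunks


# Usage:
# chunks = chunk_document(page_text, {"source_url": "https://example.com/refunds",
#                                     "doc_type": "policy", "last_updated": "2025-01-15"})
```

Each chunk repeats the last `overlap` words of the previous one, so a sentence split at a boundary still appears whole in at least one chunk.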

Step 3: Build the retrieval pipeline

Embed all chunks using your chosen embedding model. Load them into your vector database. Build a retrieval function that takes a query, embeds it, retrieves the top K most similar chunks (typically 3-8), and returns them with metadata.
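The retrieval function described above can be sketched with brute-force cosine similarity over an in-memory list. This stands in for the vector database's own query API, since Pinecone, Qdrant, and pgvector each expose their own. `embed` is a hypothetical callable that turns a string into a vector, such as a wrapper around your embedding model.

```python
import math
from typing import Callable, Dict, List, Sequence, Tuple

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(
    query: str,
    embed: Callable[[str], Vector],
    index: List[Tuple[Vector, str, Dict[str, str]]],
    k: int = 5,
) -> List[Dict[str, object]]:
    """Return the k chunks most similar to the query, with score and metadata."""
    query_vec = embed(query)
    scored = [
        {"score": cosine_similarity(query_vec, vec), "text": text, "metadata": meta}
        for vec, text, meta in index
    ]
    scored.sort(key=lambda r: r["score"], reverse=True)
    return scored[:k]
```

A real vector database replaces the linear scan with an approximate nearest-neighbour index, but it returns the same shape of result: top-K chunks, their similarity scores, and their metadata.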

Test retrieval quality with 20–30 sample queries. For each query, verify that the retrieved chunks are genuinely relevant. Poor retrieval is one of the most common causes of poor chatbot performance, so fix retrieval problems before moving on to generation.
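One way to make the sample-query check repeatable is to record, for each test query, which source should be retrieved. You then measure how often that source appears in the top K. The `hit_rate_at_k` helper and the `retrieve_sources` callable are hypothetical; plug in whatever retrieval function you built.

```python
from typing import Callable, List, Tuple


def hit_rate_at_k(
    test_cases: List[Tuple[str, str]],
    retrieve_sources: Callable[[str, int], List[str]],
    k: int = 5,
) -> Tuple[float, List[str]]:
    """Fraction of queries whose expected source appears in the top-k results.

    test_cases: hand-written (query, expected_source_url) pairs.
    retrieve_sources: returns the source URLs of the top-k retrieved chunks.
    Also returns the failing queries so they can be inspected manually.
    """
    if not test_cases:
        raise ValueError("need at least one test case")
    failures = [
        query for query, expected in test_cases
        if expected not in retrieve_sources(query, k)
    ]
    return 1 - len(failures) / len(test_cases), failures
```

Re-running this after every change to chunking, metadata, or the embedding model shows whether retrieval actually improved before you touch the prompt.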

Step 4: Write and iterate your system prompt

The system prompt is the set of instructions you give the model. This is where you define its persona, its constraints, and its behaviour. A good system prompt for a business chatbot:

- Defines the role clearly: "You are a customer support assistant for [Company]. Your job is to help customers with questions about our products and services."

- Specifies the information source: "Answer questions only based on the information provided in the context below. Do not use information from outside the provided context."

- Handles uncertainty explicitly: "If you don't know the answer or the context doesn't contain the information needed, say so clearly and suggest contacting support directly."

- Sets the response format: tone, length, structure.

- Defines out-of-scope behaviour: "Do not answer questions unrelated to [Company] products and services."

System prompt quality matters enormously and requires iteration. Plan for 5-10 rounds of refinement based on real test cases before going to production.
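The elements listed above can be assembled into a reusable template. This makes it easier to version and compare prompt revisions across refinement rounds. The wording mirrors the examples in this section; the function name and its parameters are illustrative.

```python
from typing import List


def build_system_prompt(
    company: str,
    in_scope_topics: List[str],
    support_contact: str,
    tone: str = "friendly and concise",
    max_sentences: int = 5,
) -> str:
    """Compose a system prompt from role, source, uncertainty, format, and scope rules."""
    topics = ", ".join(in_scope_topics)
    return "\n".join([
        # Role
        f"You are a customer support assistant for {company}. Your job is to help "
        "customers with questions about our products and services.",
        # Information source
        "Answer questions only based on the information provided in the context below. "
        "Do not use information from outside the provided context.",
        # Uncertainty handling
        "If the context doesn't contain the information needed, say so clearly and "
        f"suggest contacting support at {support_contact}.",
        # Response format
        f"Write in a {tone} tone, using at most {max_sentences} sentences.",
        # Out-of-scope behaviour
        f"Only answer questions about: {topics}. Politely decline anything else.",
    ])
```

Keeping each rule on its own line makes it easy to see which instruction changed between iterations when a test case starts passing or failing.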

Step 5: Build the orchestration

Connect the pieces: user message → embed query → retrieve chunks → build prompt (system prompt + retrieved context + conversation history + user message) → call LLM → return response.

Handle conversation history deliberately: include enough previous turns to maintain context (typically the last 4–6 exchanges), but not so many that the context window overflows or costs escalate.
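This is a sketch of the prompt-building step. It assumes the chat-messages format (a list of `{"role": ..., "content": ...}` dicts) used by OpenAI's Chat Completions API. Anthropic's Messages API takes the system prompt as a separate `system` parameter instead, so the first message would move there. The default of 5 exchanges is simply the midpoint of the 4–6 range above.

```python
from typing import Dict, List

Message = Dict[str, str]


def build_messages(
    system_prompt: str,
    context_chunks: List[str],
    history: List[Message],
    user_message: str,
    max_exchanges: int = 5,
) -> List[Message]:
    """Assemble system prompt + retrieved context + recent history + new user message."""
    context = "\n\n---\n\n".join(context_chunks) or "(no relevant context found)"
    # One exchange = one user turn + one assistant turn, so keep 2 * max_exchanges messages.
    recent = history[-2 * max_exchanges:] if max_exchanges > 0 else []
    return (
        [{"role": "system", "content": f"{system_prompt}\n\nContext:\n{context}"}]
        + recent
        + [{"role": "user", "content": user_message}]
    )
```

Trimming by message count is the simplest policy. A stricter variant trims by token count so that long messages can't push the prompt past the context window.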

Add error handling at every step. What happens if the vector DB is unavailable? If the LLM API times out? If the response doesn't meet format requirements? Production chatbots need graceful degradation, not silent failures.
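The following is a minimal sketch of graceful degradation. It retries a failing step a couple of times with exponential backoff, then returns a safe fallback message instead of surfacing an error to the user. `call_llm` and the fallback wording are hypothetical placeholders. In practice you would catch your SDK's specific timeout and connection exceptions rather than every `Exception`.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
logger = logging.getLogger("chatbot")

FALLBACK_REPLY = (
    "Sorry, I'm having trouble answering right now. "
    "Please try again shortly or contact our support team."
)


def with_fallback(
    step: Callable[[], T],
    fallback: T,
    retries: int = 2,
    base_delay: float = 0.5,
) -> T:
    """Run `step`; on failure retry with exponential backoff, then return `fallback`."""
    for attempt in range(retries + 1):
        try:
            return step()
        except Exception:  # narrow this to your SDK's timeout/connection errors
            logger.exception("step failed (attempt %d of %d)", attempt + 1, retries + 1)
            if attempt < retries:
                time.sleep(base_delay * 2 ** attempt)
    return fallback


# Usage (call_llm and messages are hypothetical):
# reply = with_fallback(lambda: call_llm(messages), FALLBACK_REPLY)
```

The same wrapper can guard the vector-database call. Its fallback could be an empty context list, which the low-confidence path then turns into an honest "I don't know".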

Step 6: Implement guardrails

Guardrails are checks that catch problematic outputs before they reach users. At minimum:

- Input filtering: Block or redirect obviously off-topic, harmful, or adversarial inputs.

- Output validation: Check that responses meet format requirements and don't contain obviously problematic content.

- Confidence signals: When retrieval quality is low (no highly similar chunks found), signal uncertainty explicitly rather than generating a confident-sounding response from poor context.

- Rate limiting: Prevent abuse and runaway API costs.
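Two of these guardrails are easy to sketch without an external service: a similarity-threshold gate for the confidence signal and a per-user sliding-window rate limiter. The 0.75 threshold and the 20-requests-per-minute limit are placeholder values. Calibrate the threshold against your own retrieval test set, because similarity scores vary by embedding model.

```python
import time
from collections import defaultdict, deque
from typing import Deque, Dict, List, Optional

LOW_CONFIDENCE_REPLY = (
    "I couldn't find that in our documentation. "
    "Would you like me to connect you with a member of the support team?"
)


def confidence_gate(scores: List[float], threshold: float = 0.75) -> Optional[str]:
    """Return a fallback reply if no retrieved chunk is similar enough, else None."""
    if not scores or max(scores) < threshold:
        return LOW_CONFIDENCE_REPLY
    return None  # context looks relevant; proceed to generation


class RateLimiter:
    """Allow at most `limit` requests per user within a sliding `window` (seconds)."""

    def __init__(self, limit: int = 20, window: float = 60.0) -> None:
        self.limit = limit
        self.window = window
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, user_id: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        hits = self._hits[user_id]
        # Drop timestamps that have fallen outside the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True
```

This limiter keeps state in process memory. If you run several application servers, the counters would need to live in a shared store instead.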

Step 7: Test rigorously

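Testing a chatbot is different from testing conventional applications: the same input can produce differently worded outputs, so you evaluate the quality of responses rather than checking for exact expected strings. Build a test suite of 50–100 queries covering typical questions, edge cases, questions the bot should refuse, tricky phrasing, and adversarial inputs. Run the full pipeline on each and evaluate the responses.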

Evaluation criteria: accuracy (is the answer correct?), groundedness (is it based on the retrieved context, or is the model hallucinating?), relevance (does it address the actual question?), tone (is it appropriate for your brand?), and safety (does it handle sensitive questions appropriately?).

Automated evaluation with an LLM judge (using another model to assess response quality) is increasingly standard practice and dramatically speeds up the iteration cycle.
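An LLM-judge harness can be sketched in three steps: run each test query through the pipeline, ask a second model to score the answer against the retrieved context, and collect low scorers for review. `answer_fn` and `judge_fn` are hypothetical callables wrapping your chatbot and your judge model. The rubric wording and the 1–5 JSON scoring format are assumptions, and judge scores are worth spot-checking by hand.

```python
import json
from typing import Callable, Dict, List

CRITERIA = ["accuracy", "groundedness", "relevance", "tone", "safety"]

JUDGE_TEMPLATE = """You are grading a customer support chatbot.
Score each criterion from 1 (poor) to 5 (excellent): {criteria}.
Respond with a JSON object mapping each criterion to an integer score.

Question: {question}
Retrieved context: {context}
Chatbot answer: {answer}"""


def evaluate(
    test_queries: List[str],
    answer_fn: Callable[[str], Dict[str, str]],
    judge_fn: Callable[[str], str],
    pass_mark: int = 4,
) -> List[Dict[str, object]]:
    """Return one result per query, listing any criterion scored below pass_mark."""
    results = []
    for question in test_queries:
        out = answer_fn(question)  # expected keys: "answer", "context"
        prompt = JUDGE_TEMPLATE.format(
            criteria=", ".join(CRITERIA), question=question,
            context=out["context"], answer=out["answer"],
        )
        try:
            scores = json.loads(judge_fn(prompt))
        except json.JSONDecodeError:
            scores = None
        if not isinstance(scores, dict):
            scores = {}  # unparseable judge output counts as a failure on every criterion
        failed = [c for c in CRITERIA if int(scores.get(c, 0)) < pass_mark]
        results.append({"question": question, "scores": scores, "failed": failed})
    return results
```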

Step 8: Deploy with monitoring

Production deployment needs: logging of all queries and responses (for quality review and debugging), latency tracking, error rate monitoring, API cost tracking, and a feedback mechanism (thumbs up/down at minimum) for users to signal response quality.

Plan for ongoing monitoring: the first week of production deployment almost always surfaces edge cases your test suite didn't cover. Have a process for reviewing flagged conversations and updating the knowledge base or prompt accordingly.
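Here is a minimal sketch of a per-turn log record covering the items listed above. It is serialised as one JSON line, so it can go to whatever logging pipeline you already use. The field names are illustrative. `cost_usd` is assumed to be computed from token counts and the model's per-token prices, and `feedback` is filled in later if the user rates the response.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class TurnLog:
    query: str
    response: str
    sources: List[str]          # source URLs of the retrieved chunks
    latency_ms: float
    input_tokens: int
    output_tokens: int
    cost_usd: float
    error: Optional[str] = None
    feedback: Optional[str] = None  # "up" / "down", set later if the user rates it
    turn_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    timestamp: float = field(default_factory=time.time)


def log_turn(record: TurnLog) -> str:
    """Serialise one turn as a JSON line (print it, or pass it to your logger)."""
    return json.dumps(asdict(record), ensure_ascii=False)
```

Logging the retrieved sources alongside each answer is what makes flagged conversations debuggable. You can tell whether a bad answer came from bad retrieval or from the prompt.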

Common Mistakes and How to Avoid Them

Skipping knowledge base quality review. The most common cause of poor chatbot performance. Every document in your knowledge base should be reviewed for accuracy before it's indexed.

Overpromising the scope. A chatbot that claims to answer "anything about our products" and then fails on routine questions damages trust. Start narrow, do it well, and expand.

Not handling "I don't know" correctly. LLMs will attempt to answer questions they don't have information for. Explicit instructions to acknowledge uncertainty, combined with low-similarity retrieval as a confidence signal, dramatically reduces hallucination.

Ignoring latency. A complete LLM response typically takes 2–5 seconds to generate, which feels slow in a user-facing application. Implement streaming responses (text starts displaying while the model is still generating) and optimise the retrieval steps. Aim for under 2 seconds to first token.
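The following sketch forwards streamed text to the UI and measures time to first token. It works on any iterable of text deltas. With the `openai` Python SDK (v1+), that iterable comes from `client.chat.completions.create(..., stream=True)`, reading `chunk.choices[0].delta.content` from each chunk, as the commented usage shows. The `send` callback, which pushes text to the chat UI, is hypothetical. Pass a `start` timestamp captured before the request so the measurement includes network and queueing time.

```python
import time
from typing import Callable, Iterable, List, Optional, Tuple


def stream_reply(
    deltas: Iterable[Optional[str]],
    send: Callable[[str], None],
    start: Optional[float] = None,
) -> Tuple[str, Optional[float]]:
    """Forward each text delta to the UI as it arrives.

    Returns the full reply and the time to first token in seconds
    (None if the stream produced no text).
    """
    start = time.perf_counter() if start is None else start
    first_token_s: Optional[float] = None
    parts: List[str] = []
    for delta in deltas:
        if not delta:  # some chunks carry no text (e.g. role or finish markers)
            continue
        if first_token_s is None:
            first_token_s = time.perf_counter() - start
        parts.append(delta)
        send(delta)
    return "".join(parts), first_token_s


# With the OpenAI SDK (assumed setup, not run here):
# t0 = time.perf_counter()
# stream = client.chat.completions.create(model="gpt-4o", messages=messages, stream=True)
# deltas = (c.choices[0].delta.content if c.choices else None for c in stream)
# reply, ttft = stream_reply(deltas, send=websocket_send, start=t0)
```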

No escalation path. Every chatbot needs a clear handoff to a human for cases it can't handle. Without one, users get stuck in a loop instead of getting their problem resolved.
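Below is a sketch of a handoff payload, in the spirit of the approach described in the case study later in this guide, where escalated conversations reach agents with a pre-populated summary. `summarize` is a hypothetical callable (for instance, a short LLM call). How the payload reaches your help desk depends entirely on that tool's own API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Handoff:
    reason: str                      # e.g. "out_of_scope", "low_confidence", "user_request"
    summary: str
    last_user_message: str
    transcript: List[Dict[str, str]]


def escalate(
    history: List[Dict[str, str]],
    reason: str,
    summarize: Callable[[str], str],
) -> Handoff:
    """Package the conversation so a human agent doesn't start from zero."""
    transcript_text = "\n".join(f'{m["role"]}: {m["content"]}' for m in history)
    last_user = next(
        (m["content"] for m in reversed(history) if m["role"] == "user"), ""
    )
    return Handoff(
        reason=reason,
        summary=summarize(transcript_text),
        last_user_message=last_user,
        transcript=list(history),
    )
```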

What Does It Cost?

The infrastructure cost of running a business chatbot is genuinely low. At typical usage levels:

- LLM API costs: $0.002–0.015 per conversation turn, depending on model and message length. At 1,000 conversations/month averaging around 10 turns each, that's $20–150/month.

- Vector database: Pinecone costs between $0 (free Starter tier) and about $70/month at this scale. Self-hosted Qdrant is effectively free beyond the infrastructure it runs on.

- Infrastructure: The application server running the orchestration layer costs $20–100/month depending on traffic.

Total operational cost for most business chatbots: $50–500/month. The build cost (scoping, development, testing, deployment) is typically $15,000–40,000 for a well-executed production system.
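The per-turn figure depends mainly on how many tokens each call sends and receives. Below is a back-of-the-envelope estimator. It assumes a RAG turn of roughly 3,000 input tokens (system prompt, retrieved chunks, and history) and 300 output tokens, priced at the GPT-4o list rates from the comparison table. The token counts and the 10-turns-per-conversation figure are illustrative assumptions, not measurements.

```python
def cost_per_turn(input_tokens: int, output_tokens: int,
                  input_usd_per_m: float, output_usd_per_m: float) -> float:
    """API cost of one LLM call, given prices in USD per million tokens."""
    return (input_tokens * input_usd_per_m + output_tokens * output_usd_per_m) / 1_000_000


def monthly_llm_cost(conversations: int, turns_per_conversation: float,
                     per_turn_usd: float) -> float:
    """Monthly API spend for a given conversation volume."""
    return conversations * turns_per_conversation * per_turn_usd


# GPT-4o at $2.50 input / $10 output per 1M tokens.
turn = cost_per_turn(3_000, 300, 2.50, 10.00)   # 0.0075 + 0.003, about $0.0105
month = monthly_llm_cost(1_000, 10, turn)       # about $105
print(f"per turn: ${turn:.4f}, per month: ${month:.0f}")
```

Because retrieved context dominates the input side, trimming the number of chunks (top K) or the history length is usually the quickest way to lower this number.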

The Bottom Line

Building an LLM-powered chatbot that works reliably in production requires careful architecture, rigorous testing, and ongoing maintenance. The companies that do it well get a highly capable system that handles a significant percentage of inbound queries automatically, at near-zero marginal cost, with response times no human team can match.

The companies that do it poorly get a system that confidently gives wrong answers, and discover, expensively, that a bad chatbot is worse than no chatbot at all.

If you're building a chatbot for a customer-facing use case or a high-stakes internal application, the investment in doing it properly (a clean knowledge base, careful prompt engineering, thorough testing, robust monitoring) is the difference between a tool that becomes a competitive advantage and one that becomes a support liability. If you need a vetted AI engineer to build it right, that's what we do.

Real Example: Support Chatbot, 68% Ticket Deflection in 60 Days

Kovil Case Study

A B2B SaaS company handling ~800 support tickets per month needed to reduce first-response time from 4–6 hours to under 2 minutes, without adding headcount. Their existing documentation was extensive but poorly structured and siloed across Notion, Confluence, and Google Drive.

Architecture: RAG pipeline with Claude 3.7 Sonnet, Pinecone vector database, a Python/FastAPI orchestration layer, and an Intercom-embedded chat widget. The knowledge base was built by ingesting and re-chunking 340+ documentation pages, FAQs, and product changelog entries.

System prompt focus: The chatbot was scoped strictly to product questions, billing inquiries, and integration troubleshooting. Anything outside that scope was explicitly acknowledged and routed to the support team with a pre-populated context summary so agents weren't starting from zero.

Results at 60 days:

- 68% ticket deflection rate — more than two-thirds of incoming queries resolved without human involvement

- Average first response: 8 seconds vs 4–6 hours previously

- CSAT score: 4.3/5 for chatbot-resolved conversations vs 4.1/5 for human-resolved

- Support team capacity freed: approximately 22 hours per week, redirected to complex enterprise account issues

The knowledge base quality was the decisive factor. The first two weeks of the project were spent cleaning, restructuring, and validating documentation before a single line of chatbot code was written. Teams that skip this step consistently get worse results.

Frequently Asked Questions

What is an LLM-powered chatbot and how is it different from a rule-based chatbot?

A rule-based chatbot follows pre-defined decision trees: if the user says X, the bot replies Y. It breaks as soon as users phrase things unexpectedly. An LLM-powered chatbot uses a large language model to understand natural language in context, handle multi-turn conversations, and respond to questions phrased in any number of ways. The tradeoff is that LLM systems require more careful design to prevent hallucination, the tendency to generate plausible-sounding but incorrect answers.

What is RAG and why is it better than fine-tuning for business chatbots?

RAG (Retrieval-Augmented Generation) keeps your business knowledge in a searchable database and retrieves the most relevant information at query time, passing it to the LLM as context. Fine-tuning bakes knowledge into the model weights. RAG is preferred for business chatbots because: it's far cheaper and faster to implement; when your information changes (prices, policies, products), you update the knowledge base rather than retraining the model; and it dramatically reduces hallucination by grounding the model in specific retrieved text rather than relying on memorized training data.

Which LLM should I use for my business chatbot, GPT-4o or Claude?

Both are production-capable. GPT-4o excels at structured tasks, code generation, and JSON output; it's slightly cheaper and has the broadest developer ecosystem. Claude performs better on tasks requiring careful reasoning about nuanced information, long-document analysis, and reliably following complex instructions. It also tends to be more cautious about generating misleading content, which matters for customer-facing applications. For most internal tools with structured data, GPT-4o is the better choice. For customer-facing support with complex policies, Claude has a slight edge.

How do I prevent my AI chatbot from hallucinating?

The most effective approach is RAG architecture, grounding every response in retrieved documents from your knowledge base rather than the model's training data. Beyond that: use a well-crafted system prompt that instructs the model to say 'I don't know' when the answer isn't in the provided context (rather than guessing); implement confidence thresholds that escalate to a human agent when uncertainty is high; and continuously monitor production conversations to identify and correct patterns of incorrect responses.

How much does it cost to build and run an LLM-powered chatbot?

Build costs for a production RAG chatbot typically range from $25,000–$75,000 depending on complexity, knowledge base size, and interface requirements. Ongoing running costs are low. OpenAI's text-embedding-3-small costs $0.02 per million tokens for embeddings, and GPT-4o inference costs $2.50 per million input tokens and $10 per million output tokens. A chatbot handling 10,000 queries per month typically costs $50–$200/month in API fees, plus $20–$100/month for a managed vector database like Pinecone.


Want to build an AI chatbot for your business?

We build LLM-powered chatbots that are actually useful: connected to your data, grounded in your docs, and deployed in your stack. Not a generic off-the-shelf wrapper.